Calibration Services

A thermometer measures temperature. Clocks track time. Hygrometers monitor humidity, odometers measure distance, and ohmmeters verify electrical resistance. These familiar devices point to a much larger world of measurement tools used in manufacturing, laboratories, utilities, healthcare, aerospace, automotive production, and field service environments where dependable data supports quality, safety, and process control.

Meters, gauges, sensors, transducers, and testing instruments are calibrated against recognized standards so they can assign accurate quantitative values to the variables they measure. When buyers compare calibration companies, they are usually looking for repeatability, traceability, documented results, and dependable service that helps keep inspection equipment, production systems, and regulated processes operating within tolerance.

To maintain accuracy and consistency, measuring instruments need calibration and periodic recalibration. Over time, normal use, vibration, temperature swings, contamination, humidity, and transport can cause measurement drift that affects performance and confidence in the reading. Calibration services test, adjust, verify, and document equipment so instruments continue performing to specification in their intended application.

Calibration Services FAQ

Why is calibration important for measuring instruments?

Calibration helps measuring instruments deliver accurate, repeatable, and traceable readings. Without routine verification, devices can drift from their stated tolerance, which can affect product quality, workplace safety, documentation, and compliance in sectors such as aerospace, automotive, medical, electronics, food processing, and industrial manufacturing.

How often should equipment be recalibrated?

The recommended schedule is called the calibration interval, and it depends on the instrument type, frequency of use, risk tolerance, and operating environment. Equipment used in production, inspection, laboratories, or harsh field conditions often needs more frequent verification. If a device experiences shock, overload, contamination, or unusual temperature or humidity exposure, recalibration should be considered right away.

What types of calibration services are available?

Calibration services commonly include electrical calibration, dimensional calibration, mechanical calibration, pressure calibration, temperature calibration, flow calibration, and physical calibration. Providers may also handle pipettes, torque tools, load cells, sensors, gauges, balances, thermocouples, ovens, and machine systems, depending on the industries they serve and the standards they support.

Which industries rely most on calibration services?

Calibration services are widely used in automotive, aerospace, defense, electronics, energy, medical device production, laboratories, food and beverage processing, construction, utilities, and general manufacturing. Any operation that relies on accurate measurements for testing, inspection, process validation, or quality assurance benefits from a documented calibration program.

What devices commonly require calibration?

Common devices include load cells, strain gauges, weighing scales, balances, thermocouples, pressure gauges, torque wrenches, dimensional tools, data acquisition systems, lasers, vibration instruments, speedometers, environmental sensors, and process controllers. These instruments need dependable calibration to support precise measurement, troubleshooting, acceptance testing, and routine quality checks.

What role do ISO and IEC standards play in calibration?

ISO and IEC standards provide a recognized framework for calibration, measurement traceability, testing methods, and documentation. In practice, these standards help laboratories, service providers, and manufacturers align procedures, compare results, and maintain confidence that instruments are performing within accepted limits.

The History Of Calibration

The practice of measurement reaches back to the earliest civilizations, when people needed a dependable way to compare weight, distance, volume, and quantity for trade, construction, and taxation. Standardized measurement became the foundation for commerce because buyers and sellers needed a common reference point.

One of the earliest distance references was the cubit, based on the length from a man’s elbow to the tip of his middle finger. It was useful, but because it varied from person to person, it also showed why shared measurement standards were needed.

During the reign of Henry I of England, the yard was described as the distance from the king’s nose to the tip of his outstretched thumb. While still tied to the human body, it represented another attempt to create a more uniform standard for trade and workmanship.

By the late twelfth and early thirteenth centuries, England had begun formalizing measures for length, wine, and beer. These efforts reflected a growing recognition that reliable standards support fair exchange, reduce disputes, and improve consistency across markets.

The invention of the mercury barometer in the seventeenth century opened the door to more refined pressure measurement. Instruments such as barometers and manometers expanded the ability to quantify atmospheric and process conditions with better repeatability.

The metric system emerged in France in the 1790s and became one of the most influential developments in measurement history. It introduced a rational, scalable framework that later evolved into the international standards used across science, industry, and global trade.

The term calibrate later came into wider use as industries demanded more exact relationships between a reference standard and the device being measured. Early applications were associated with matching dimensions and tolerances so equipment and components could perform predictably.

As manufacturing expanded, calibration became more closely tied to interchangeable parts, process control, and production quality. Consistent measurements allowed factories to reduce variation, improve fit, and support larger-scale industrial output.

By the twentieth century, measurement systems were modernized further, supporting the spread of international standards and more advanced quality practices. Laboratories, manufacturers, and inspection teams increasingly relied on traceable methods rather than informal comparisons.

Rising demands in transportation, energy efficiency, and environmental testing encouraged the development of more specialized instruments. As systems became more complex, calibration services also grew more specialized to support new technologies and tighter tolerances.

International standards organizations helped create a common language for calibration, verification, and testing. Their work made it easier for suppliers, laboratories, and manufacturers in different countries to compare results and align procedures.

The computer age accelerated precision measurement through CAD, automated inspection, GD&T, and digitally controlled manufacturing systems. These tools improved the way dimensions, tolerances, and measurement data were defined, communicated, and verified.

Today, calibration supports everything from industrial automation and medical devices to research laboratories and aerospace systems. As technology advances, the demand for accurate, repeatable, and traceable measurement continues to grow.

What is Calibration?

Metrology, the science of measurement, provides the standards that support trade, manufacturing, utilities, environmental monitoring, healthcare, and research. Its role goes far beyond the lab, because accurate measurement influences product quality, process efficiency, safety, and customer confidence across nearly every industry.

At its core, metrology involves defining a measurement unit, realizing that unit through instrumentation or reference standards, and maintaining traceability so results can be compared meaningfully across time and location.

A unit of measure may describe temperature, time, pressure, mass, flow, electrical resistance, distance, force, volume, or countless other variables. Once that unit is defined, it can be placed on a scale and compared with a recognized reference so the resulting measurement has meaning and consistency.

Calibration is the process of comparing an instrument or device under test to a known standard and documenting the difference. If the reading falls outside acceptable tolerance, the equipment may be adjusted, repaired, or flagged for corrective action so it can return to service with greater confidence.
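The comparison described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the names `check_point` and `check_instrument`, the thermometer readings, and the ±0.5 °C tolerance are all hypothetical, and real procedures also account for measurement uncertainty.

```python
from dataclasses import dataclass


@dataclass
class PointResult:
    reference: float    # value realized by the reference standard
    reading: float      # value indicated by the device under test (DUT)
    error: float        # reading minus reference
    in_tolerance: bool  # True when |error| is within the allowed tolerance


def check_point(reference: float, reading: float, tolerance: float) -> PointResult:
    """Compare one DUT reading with the reference and record the difference."""
    error = reading - reference
    return PointResult(reference, reading, error, abs(error) <= tolerance)


def check_instrument(points, tolerance):
    """Evaluate (reference, reading) pairs; the instrument passes only if every point passes."""
    results = [check_point(ref, rdg, tolerance) for ref, rdg in points]
    return results, all(r.in_tolerance for r in results)


# Hypothetical as-found data for a thermometer with a +/-0.5 degC tolerance.
as_found = [(0.0, 0.1), (50.0, 50.3), (100.0, 100.7)]
results, passed = check_instrument(as_found, tolerance=0.5)

for r in results:
    print(f"ref={r.reference:6.1f}  reading={r.reading:6.1f}  "
          f"error={r.error:+.2f}  ok={r.in_tolerance}")
print("PASS" if passed else "FAIL: adjust, repair, or flag for corrective action")
```

In this made-up data set the last point is out of tolerance, so the instrument as a whole would be flagged rather than returned to service.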

Because measurement accuracy affects inspection results, product acceptance, and process decisions, the test equipment itself must be checked at planned intervals. That schedule is the calibration interval. Instruments exposed to heat, moisture, vibration, overload, contamination, or impact may require verification sooner to protect measurement integrity.
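As a rough sketch of how a calibration interval might be tracked, the snippet below computes a due date and treats an adverse event (such as overload or impact) as an immediate trigger. The function names, the 365-day interval, and the dates are assumptions chosen for illustration; actual intervals are set per instrument and risk.

```python
from datetime import date, timedelta


def next_due(last_calibrated: date, interval_days: int) -> date:
    """Date on which the next scheduled calibration falls due."""
    return last_calibrated + timedelta(days=interval_days)


def needs_calibration(today: date, last_calibrated: date,
                      interval_days: int, adverse_event: bool = False) -> bool:
    """True when the interval has elapsed or an adverse event was reported."""
    if adverse_event:
        return True
    return today >= next_due(last_calibrated, interval_days)


# Hypothetical instrument last calibrated on 15 January 2024, 365-day interval.
last = date(2024, 1, 15)
print(next_due(last, 365))
print(needs_calibration(date(2024, 6, 1), last, 365))
print(needs_calibration(date(2024, 6, 1), last, 365, adverse_event=True))
```

Expressing the interval in days keeps the date arithmetic unambiguous; a program that schedules by calendar months would need its own month-handling rule.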

Calibration Devices

- Load Cells

- Transducers that convert applied force into an electrical signal that reflects deformation or load. In calibration work, a load cell is compared against a known reference so technicians can verify output, linearity, repeatability, and performance across the expected operating range.

- Because a load cell is often built into a larger machine or weighing system, inaccurate readings can affect downstream calculations, batching, safety limits, and quality data. Traceable calibration helps keep equipment and machinery operating as intended.

- Instrument Calibration

- Used to verify and adjust electronic measuring devices so they continue reporting accurate values. Typical candidates include balances, acoustic and vibration instruments, industrial ovens, controllers, lasers, process meters, and speedometers, all of which depend on stable signal measurement and documented tolerance control.

- Strain Gauges

- A sensing element whose electrical resistance changes in response to force or deformation. Strain gauges are widely used in torque, pressure, force, and position sensing, and their performance is closely tied to the quality of the calibration process and the stability of the full measurement chain.

- Data Acquisition

- Sensors and data acquisition systems convert physical conditions into digital information that can be stored, monitored, and analyzed. Accurate calibration supports reliable data logging, automation, troubleshooting, and process validation while helping prevent overloaded systems, signal drift, and false readings.
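To make the load cell and data acquisition entries above more concrete, here is a minimal sketch of a two-point (zero and span) scaling that maps a raw signal to engineering units, plus a simple repeatability figure. It assumes a linear sensor response; the function names, the mV/V values, and the 1000 N reference load are hypothetical.

```python
def two_point_scale(raw_zero, raw_span, value_zero, value_span):
    """Return a function that maps raw readings to engineering units.

    Assumes the sensor response is linear between the two calibration points.
    """
    if raw_span == raw_zero:
        raise ValueError("calibration points must differ")
    gain = (value_span - value_zero) / (raw_span - raw_zero)

    def convert(raw):
        return value_zero + gain * (raw - raw_zero)

    return convert


def repeatability_pct(readings, full_scale):
    """Spread of repeated readings at one load, as a percentage of full scale."""
    return (max(readings) - min(readings)) / full_scale * 100.0


# Hypothetical load cell: 0.002 mV/V unloaded, 2.002 mV/V at a 1000 N reference load.
to_newtons = two_point_scale(raw_zero=0.002, raw_span=2.002,
                             value_zero=0.0, value_span=1000.0)
print(f"{to_newtons(1.002):.1f} N")  # a mid-range raw reading converted to force

# Three repeated readings (in N) taken at the same nominal 500 N load.
repeat = repeatability_pct([499.8, 500.1, 500.0], full_scale=1000.0)
print(f"repeatability: {repeat:.3f} % of full scale")
```

A two-point scaling only removes offset and gain error; checking intermediate points is what reveals nonlinearity across the operating range.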

Calibrating Through Sensors

Sensors measure sound, vibration, motion, position, temperature, flow, and many other variables. Acoustic and seismic examples include geophones, microphones, hydrophones, and seismometers. Accurate calibration helps these systems produce dependable signals for monitoring, analysis, alarms, and closed-loop control.

- Chemical Sensors

- Chemical sensors detect the presence, concentration, or absence of substances in gases and liquids. Carbon monoxide detectors, gas monitors, and breath analyzers are familiar examples, and their calibration directly affects safety, alarm reliability, and process monitoring accuracy.

- Automotive Sensors

- Automotive sensors measure oil pressure, coolant temperature, wheel speed, crankshaft and camshaft position, fuel system values, air flow, and occupant safety conditions. Their calibration supports engine performance, emissions control, diagnostics, driver comfort, and vehicle safety systems.

- Proximity Sensors

- Proximity sensors detect presence, position, motion, or boundary changes. They are common in machine guarding, automation, security systems, vehicle applications, and moisture or sound monitoring, where dependable calibration helps reduce nuisance trips and missed detections.

Instruments that Require Calibration

Environmental instruments measure humidity, moisture, air quality, air flow, temperature, soil conditions, tidal behavior, and related variables used in agriculture, utilities, environmental studies, and facility management. Regular calibration keeps these readings dependable for compliance, trending, and operational decisions.

Other instruments monitor radiation, ionization, and subatomic particles, including Geiger counters, radon detectors, dosimeters, and ionization chambers. In these applications, calibration supports safer monitoring and better confidence in exposure or detection results.

Navigational tools such as compasses, gyroscopes, and altimeters are sensitive instruments that benefit from frequent calibration so their readings remain dependable in field, marine, aviation, and survey use.

Gauges That Require Calibration

- Optical Gauges

- Optical gauges and related sensors detect, translate, and transmit measurable levels of light, color, heat, or radiation into readable output. Examples include fiber-optic sensors, infrared sensors, LED sensors, photo switches, scintillators, and photon counters used in inspection, automation, and scientific applications.

- Temperature Gauges

- Temperature gauges range far beyond a simple liquid-in-glass thermometer. They may include bimetal devices, calorimeters, flame detectors, thermocouples, RTD-based systems, and pyrometers used to verify thermal conditions in manufacturing, laboratories, food processing, and process control.

Calibration Images, Diagrams and Visual Concepts

A calibration service aims to detect inaccuracies and uncertainties in measuring instruments or other pieces of equipment.

The International System of Units (SI) is a standardized system of measurement whose goal is to communicate measurements precisely through a coherent and consistent expression of units describing the magnitudes of physical quantities.

The goal of calibration services is to minimize error and increase confidence in measurements.

A universal calibrating machine’s main function is to calibrate compression-type instruments.

An example of a pressure calibrator, which applies and controls the pressure supplied to a device under test (DUT).

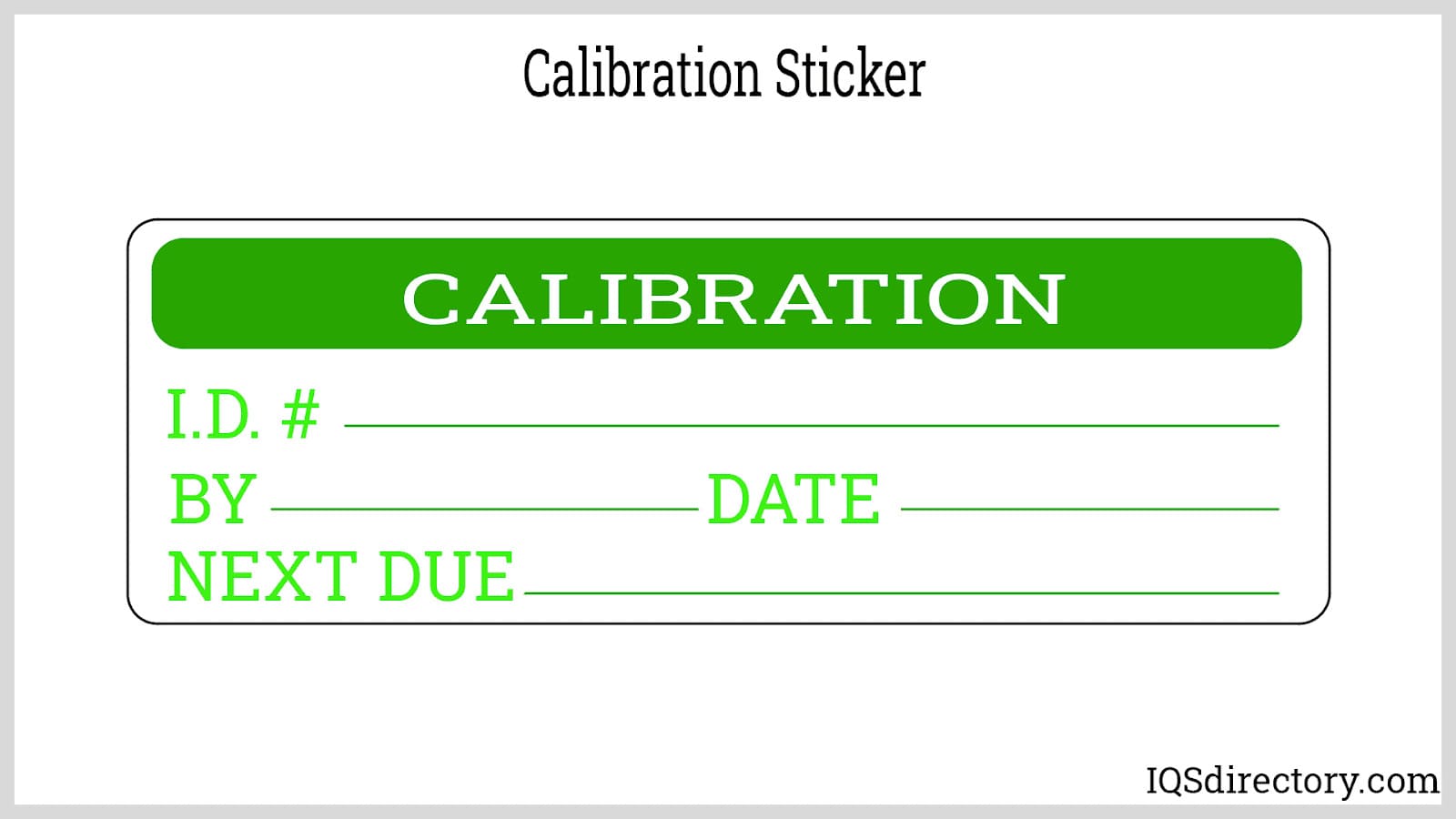

A calibration sticker attached to the equipment confirms that it has been calibrated and usually shows the equipment serial number and the date of the next calibration.

Types of Calibration

Calibration instruments may be handheld, portable, or fixed. Handheld tools are useful for in-house checks and field service work, portable units are designed to move to the equipment being tested, and fixed calibration stations are often selected when users want a controlled environment and the highest level of repeatability.

ISO and IEC standards help establish the framework for calibration methods, documentation, and measurement consistency. The exact procedure depends on the instrument, the process risk, the operating range, and the tolerance required by the application.

Calibration services may provide on-site work, laboratory testing, adjustment, repair coordination, certificates, and maintenance support for systems already in use. A good service provider can also explain the relevant standards, tolerances, turnaround expectations, and documentation requirements for the job.

- Electrical Calibration

- Electrical calibration verifies time, voltage, current, resistance, inductance, capacitance, radio frequency, and power measurements so electrical instruments continue operating within stated tolerances.

- Dimensional Calibration

- Dimensional calibration verifies tools such as calipers, micrometers, and dial indicators, typically against references such as gauge blocks and comparators, so they can measure physical dimensions reliably in close-tolerance work.

- Mechanical Calibration

- Mechanical calibration evaluates force-related variables such as weight, tension, compression, and torque so mechanical instruments provide dependable readings during testing, assembly, and maintenance.

- Physical Calibration

- Physical calibration uses specialized equipment to measure temperature, pressure, humidity, vacuum, and related conditions in a controlled environment, often with hydraulic, pneumatic, dial, and digital reference systems.

- Equipment Calibration

- Equipment calibration is the broader process of adjusting and verifying instruments, machines, or test devices so they produce consistent and repeatable measurements.

- Hardness Tests

- Hardness testing evaluates material hardness and related strength characteristics, helping users understand resistance to deformation, wear, and mechanical loading in quality and material verification work.

- Machine Calibration

- Machine calibration adjusts production or test machinery to match established standards, supporting better process accuracy, part consistency, and reliable machine performance.

- Pipette Calibration

- Pipette calibration verifies that pipettes contain and dispense precise fluid volumes, which is especially important in laboratories, pharmaceutical work, biotechnology, and other applications where small volume errors can affect results.

- Torque Wrench Calibration

- Torque wrench calibration confirms that the tool applies the intended amount of torque so fastening operations meet specification, reduce assembly errors, and improve reliability.

Industries that Use Calibration Services

The automotive industry is one of the broadest users of calibration services because vehicle design, testing, manufacturing, and inspection all depend on precise measurement. Engineers and technicians measure aerodynamics, weight, pressure, stress, strain, torque, speed, temperature, electrical values, fuel system performance, emissions, and ride quality with tools that range from handheld gauges to wind tunnels, dynamometers, and large-scale test systems.

Beyond automotive work, calibration services support electronics, aerospace, defense, meteorology, construction, medical manufacturing, food processing, utilities, research laboratories, energy production, and general industrial manufacturing. Buyers in these sectors often search for calibration companies that can deliver traceable documentation, fast turnaround, on-site service, laboratory capability, and support for the specific instruments used in testing, inspection, process control, and regulatory compliance.

Calibration Services Terms

- Accuracy

- The closeness of agreement between the measured output of a device and the true value of the quantity being measured. On specification sheets, accuracy is usually stated as a tolerance limit that defines the allowable deviation. High accuracy is important for reliable data collection in a wide range of applications.

- Alignment

- The process of making precise adjustments to a device or system to bring it into proper operational condition. Proper alignment ensures optimal performance, reduces wear and tear, and improves measurement consistency.

- Analog Measurement

- A measurement system that generates a continuous output signal corresponding to the input. Unlike digital measurements, which produce discrete values, analog measurement provides smooth, uninterrupted readings that reflect real-time changes in the measured parameter.

- Axial Strain

- A type of deformation that occurs along the same axis as the applied force or load. It measures how much an object stretches or compresses in the direction of the force, providing insight into its structural integrity and performance under stress.

- Calibration Curve

- A graphical representation that compares the output of a measurement device to a set of known standard values. This curve helps determine the accuracy of an instrument and assists in making necessary adjustments to ensure precise readings.

- Calibration Laboratories

- Specialized facilities or companies that provide professional calibration services for instruments, gauges, and sensors. These laboratories use highly accurate reference standards to verify and adjust measurement devices, ensuring compliance with industry regulations and quality control requirements.

- Capacitor

- An electronic component that stores and releases electrical energy in a circuit. Capacitors are used to regulate voltage, filter signals, and store energy for short-term power supply needs in various electrical and electronic applications.

- Compensation

- A method of correcting or minimizing known errors in a measurement system by using specially designed devices, materials, or computational techniques. Compensation enhances the accuracy and reliability of readings by counteracting the effects of external influences such as temperature changes, mechanical stress, or electrical interference.

- Equilibrium

- A stable condition in which all opposing forces or influences are balanced, preventing any net change in the system. Equilibrium is important in physics, engineering, and chemistry, ensuring that processes function predictably and efficiently.

- Fatigue Limit

- The highest level of cyclic stress that a material can endure for an effectively unlimited number of load cycles without fatigue failure. Knowing the fatigue limit of a material helps engineers design durable and long-lasting components for mechanical systems and structures.

- Hertz (Hz)

- A unit of frequency that measures the number of cycles or oscillations per second in a periodic waveform. Hertz is commonly used in the study of electrical signals, sound waves, and electromagnetic radiation to determine signal properties and behavior.

- K-Factor

- A value that represents the harmonic content of an electrical load current and its impact on a power source. The K-factor helps engineers assess the safe operating limits of electrical systems, reducing the risk of overheating and inefficiencies caused by harmonic distortion.

- Mean Stress

- The average of the maximum and minimum stress in a material subjected to repeated loading and unloading cycles. Mean stress is important in fatigue analysis, helping engineers predict how long a material can withstand repeated stress variations before failing.

- Metrology

- The scientific study of measurement, including the development of standards, methods, and instruments used for accurate quantification of physical properties. Metrology ensures consistency, reliability, and compliance with industry regulations across various fields, including manufacturing, healthcare, and research.

- Nonlinearity

- A condition in which the relationship between the input and output of a system deviates from a straight-line proportional response. In calibration, nonlinearity is expressed as the maximum deviation of a device’s output from an ideal linear response and is usually given as a percentage of the full-scale measurement range.

- Output

- The measurable result or signal generated by an instrument, sensor, or system in response to an input stimulus. The accuracy and stability of the output are important in ensuring reliable data collection and process control in industrial, scientific, and electronic applications.

- Range

- The span of values over which a measuring instrument or sensor can provide accurate readings without exceeding its operational limits. The range defines the minimum and maximum values that a device can effectively measure while maintaining precision and reliability.

- Resistor

- An electrical component that introduces resistance into a circuit to control current flow and voltage levels. Resistors play a major role in regulating electrical signals, preventing overloading, and shaping circuit behavior in electronic devices and power systems.

- Resolution

- The smallest detectable change in the measured value that an instrument can register. Higher resolution allows for finer measurement distinctions, making it valuable in applications requiring very precise readings, such as scientific research, medical diagnostics, and advanced manufacturing.

- Torque

- A measure of the rotational force applied to an object around an axis, determining its ability to rotate or twist. Torque is fundamental in mechanical systems, automotive engineering, and industrial machinery, influencing the performance and efficiency of engines, motors, and drive systems.
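The Calibration Curve and Nonlinearity entries above can be illustrated with a short Python sketch. It assumes the ideal response is the straight line through the first and last calibration points (an endpoint line; a best-fit line is another common choice) and reports the largest deviation as a percentage of the full-scale output span. The function name and the data are hypothetical.

```python
def nonlinearity_pct_fs(inputs, outputs):
    """Largest deviation from the endpoint straight line, as % of full-scale output.

    Assumes the points are ordered from the lowest to the highest applied input.
    """
    if len(inputs) != len(outputs) or len(inputs) < 3:
        raise ValueError("need at least three matched points")
    x0, x1 = inputs[0], inputs[-1]
    y0, y1 = outputs[0], outputs[-1]
    if x1 == x0 or y1 == y0:
        raise ValueError("endpoints must differ")
    slope = (y1 - y0) / (x1 - x0)
    full_scale = abs(y1 - y0)
    worst = max(abs(y - (y0 + slope * (x - x0))) for x, y in zip(inputs, outputs))
    return worst / full_scale * 100.0


# Hypothetical calibration curve: applied load (N) against indicated output (mV/V).
loads = [0, 250, 500, 750, 1000]
indicated = [0.000, 0.501, 1.003, 1.502, 2.000]

nl = nonlinearity_pct_fs(loads, indicated)
print(f"nonlinearity: {nl:.2f} % of full scale")
```

Plotting `indicated` against `loads` gives the calibration curve itself; the figure computed here summarizes how far that curve strays from the assumed ideal line.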